Docker monitoring 101

Docker has been a beneficial platform for developers who need to work with multiple containers at once. It is not just the cost benefits that created an interest in Docker among organizations, but also the flexibility of enabling applications to run in any cloud infrastructure. The layers built around Docker make it easy to move and scale these containers without manual intervention and help build microservices made up of many containers.

Developers found Docker highly useful, but admins soon realized that the layers surrounding the containers make monitoring harder. These layers make it difficult for admins to see how their Docker environments are performing and behaving.

Let’s first dig deeper into the issues that complicate Docker monitoring.

Challenges in Docker monitoring

Here are the major challenges an IT admin faces while monitoring a Docker environment.

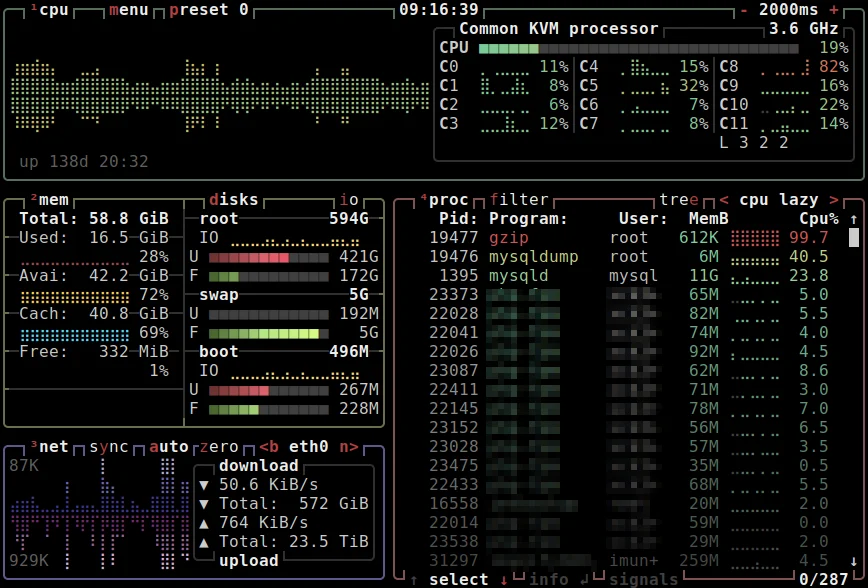

Resource management: If one container uses the lion’s share of the resources, the rest may underperform. Traditional monitoring tools tend to report the resource usage of each container separately. Going through these reports manually puts more on the admin’s plate and makes it harder to locate the root cause of issues like slowdowns and insufficient memory, which affects both container performance and the end-user experience.

Transaction tracing: When an issue arises, IT admins need to trace each transaction in each container. Because each container runs its own libraries, logs, and application code, many transactions occur among the containers simultaneously, and tracking every one of them is often difficult. If a slow transaction is not reported promptly, a small crack might grow into a bigger issue and affect the performance of the entire system.

Multiple layers in the infrastructure: Containers add layers to your infrastructure, so monitoring tools must automatically discover all the running containers across those layers. The added layers make it difficult to track changes in container deployments in real time.

Features to look out for in a Docker monitoring tool

Here are the key features to look for when choosing a monitoring tool for your Docker environment.

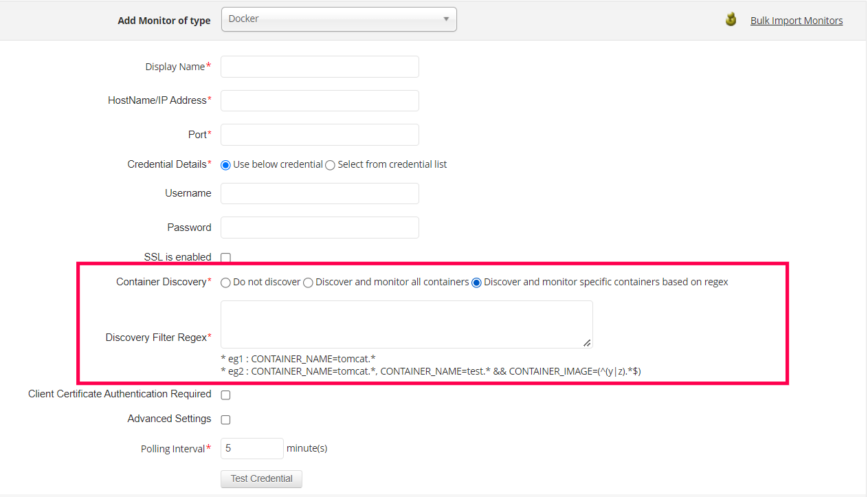

Automatic container discovery: Containerized environments are dynamic and change constantly. Administrators need to be regularly informed about what is running, what is inactive, and where each element sits in their containerized environment. Tracking the dependencies and transactions between containers manually leaves blind spots that can cause issues.

Auto-discovery of the services makes it easier for admins who are tasked with the challenging job of reviewing the layers of their Docker environment.

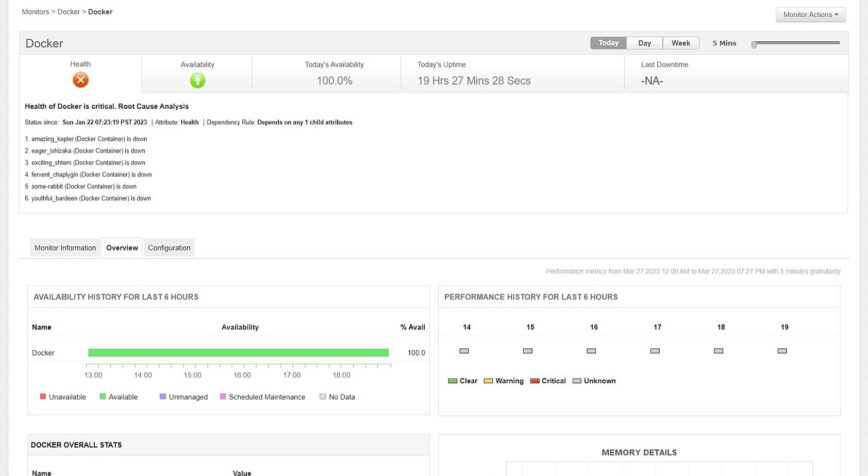
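To make the idea of auto-discovery concrete, here is a minimal sketch of its core step: comparing two snapshots of the environment to see what appeared, disappeared, or changed state. The function name `diff_containers` and the sample snapshots are hypothetical; a real tool would build the snapshots from the container runtime rather than hard-code them.

```python
def diff_containers(previous, current):
    """Compare two snapshots that map container ID -> state string."""
    prev_ids, curr_ids = set(previous), set(current)
    return {
        # IDs seen now but not in the earlier snapshot
        "started": sorted(curr_ids - prev_ids),
        # IDs that were present earlier and are gone now
        "removed": sorted(prev_ids - curr_ids),
        # IDs present in both snapshots whose state differs
        "state_changed": sorted(
            cid for cid in prev_ids & curr_ids if previous[cid] != current[cid]
        ),
    }


# Hypothetical snapshots taken a few seconds apart.
earlier = {"a1": "running", "b2": "running", "c3": "exited"}
later = {"b2": "exited", "c3": "exited", "d4": "running"}

changes = diff_containers(earlier, later)
print(changes)
```

Running a comparison like this on a short interval is one simple way to avoid the blind spots that manual tracking leaves.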

Deeper visibility into the environment: Docker hosts need constant monitoring across a wide range of performance metrics. The complex and dynamic structure of Docker containers requires monitoring across the full stack to track application-level metrics. Administrators need to have visibility into every nook of the infrastructure to trace every KPI and ensure optimal performance.

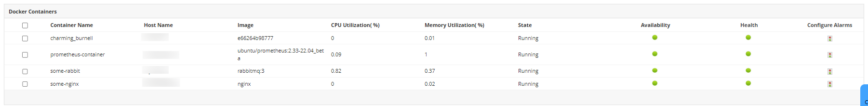

As resources are dynamically allocated in Docker, gaining visibility deeper under the layers is a challenge that can be overcome by using an extensive monitoring platform. The application code needs to be analyzed thoroughly to identify erroneous and time-consuming algorithms. Key performance metrics like memory usage, network I/O stats, and CPU utilization must be tracked per container in real time.
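As an illustration of per-container CPU tracking, the sketch below derives a CPU percentage from a single stats sample. It assumes the sample has the shape of the Docker Engine API container stats response (`cpu_stats`, `precpu_stats`, `system_cpu_usage`, `online_cpus`); verify the field names against your Engine version. The function name `cpu_percent` and the sample numbers are made up for the example.

```python
def cpu_percent(stats):
    """Estimate CPU utilization (%) from one stats sample.

    The sample carries the current and the previous CPU counters,
    so the percentage is computed from their difference.
    """
    cpu = stats["cpu_stats"]
    pre = stats["precpu_stats"]
    cpu_delta = cpu["cpu_usage"]["total_usage"] - pre["cpu_usage"]["total_usage"]
    system_delta = cpu["system_cpu_usage"] - pre["system_cpu_usage"]
    if cpu_delta <= 0 or system_delta <= 0:
        return 0.0  # no measurable activity between the two readings
    # Scale by the CPU count so one fully used core on a 2-CPU host reads 100%.
    return cpu_delta / system_delta * cpu.get("online_cpus", 1) * 100.0


# Hypothetical sample; the counters are in nanoseconds in a real response.
sample = {
    "cpu_stats": {
        "cpu_usage": {"total_usage": 1250},
        "system_cpu_usage": 11000,
        "online_cpus": 2,
    },
    "precpu_stats": {
        "cpu_usage": {"total_usage": 1000},
        "system_cpu_usage": 10000,
    },
}

print(cpu_percent(sample))
```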

A monitoring tool that provides transparent and holistic insights about the required KPIs is necessary for organizations that employ Docker.

Tracking traffic and memory usage at the container level: Query and data requests must be constantly tracked to avoid bottlenecks. Otherwise, when containers receive unusual traffic that exceeds what they were provisioned to handle, performance may slow down.

Memory usage must be analyzed from container to container so that the admin can identify the containers consuming more memory than they are allocated.
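A container-by-container memory check can be as simple as comparing each container’s usage with its limit. The sketch below is one possible approach under stated assumptions: the function name `near_memory_limit`, the 90% threshold, and the sample figures are all hypothetical.

```python
MIB = 1024 * 1024


def near_memory_limit(containers, threshold=0.9):
    """Return names of containers at or above `threshold` of their memory limit.

    `containers` maps a container name to a (usage_bytes, limit_bytes) pair.
    Results are ordered from the highest usage ratio to the lowest.
    """
    ratios = {
        name: usage / limit
        for name, (usage, limit) in containers.items()
        if limit > 0  # skip containers without a configured limit
    }
    flagged = [name for name, ratio in ratios.items() if ratio >= threshold]
    return sorted(flagged, key=lambda name: ratios[name], reverse=True)


# Hypothetical readings for three containers.
readings = {
    "web": (480 * MIB, 512 * MIB),
    "db": (300 * MIB, 1024 * MIB),
    "cache": (256 * MIB, 256 * MIB),
}

print(near_memory_limit(readings))
```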

Forecasting performance: Metrics like CPU utilization and disk space are tracked day to day. But an admin also needs to understand resource utilization trends in the system and evaluate resource needs as the Docker infrastructure expands. That is where forecasting comes in. Performance metrics and their reports must be compared day to day, and trends must be tracked over time periods.

Forecasting performance trends of required elements like disk operations, crashed containers, and packets based on the generated reports helps with capacity planning and making well-informed decisions.
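To show what trend-based forecasting can look like in its simplest form, the sketch below fits a straight line to daily disk usage readings and estimates how many days remain before capacity is reached. A least-squares line is an assumption chosen for illustration; real monitoring tools may use more sophisticated models. The function names and sample data are hypothetical.

```python
def linear_trend(values):
    """Least-squares slope and intercept for evenly spaced daily readings."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two readings to fit a trend")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    variance = sum((x - mean_x) ** 2 for x in range(n))
    slope = covariance / variance
    return slope, mean_y - slope * mean_x


def days_until_full(values, capacity):
    """Days after the latest reading until the trend line reaches `capacity`.

    Returns None when usage is flat or shrinking.
    """
    slope, intercept = linear_trend(values)
    if slope <= 0:
        return None
    day_full = (capacity - intercept) / slope
    return max(0.0, day_full - (len(values) - 1))


# Hypothetical disk usage in GB over five consecutive days, 100 GB capacity.
daily_usage_gb = [50, 52, 54, 56, 58]
print(days_until_full(daily_usage_gb, capacity=100))
```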

Smart alerting: To ensure smooth performance, timely identification of a threshold breach is a must. But the dynamic nature of Docker causes the optimal values of performance metrics to vary. Machine learning-based alerting systems can help by automatically calculating the optimal values for metrics in real time and preventing false alarms. Automating corrective actions helps eliminate repetitive tasks, thereby improving resolution time and efficiency.

Monitoring is not just about what you observe; you also need to be able to interact with the infrastructure and take action immediately if anything goes wrong. A console that enables you to make changes to the infrastructure, like turning a container on and off whenever required while monitoring, takes almost half the weight off the admins’ shoulders.
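The idea of a threshold that adapts to recent behavior can be illustrated with a very simple statistical stand-in: alert only when a reading exceeds the recent mean by several standard deviations. This is not machine learning and not how any particular product works; it is a minimal sketch, and the function names, the multiplier `k`, and the sample readings are assumptions.

```python
from statistics import mean, stdev


def adaptive_threshold(history, k=3.0):
    """Threshold derived from recent readings: mean plus k standard deviations."""
    return mean(history) + k * stdev(history)


def is_anomalous(history, latest, k=3.0):
    """True when `latest` exceeds the threshold computed from `history`."""
    if len(history) < 2:
        return False  # not enough data to estimate variability
    return latest > adaptive_threshold(history, k)


# Hypothetical CPU utilization readings (%) for one container.
recent_cpu = [40, 42, 41, 39, 43, 40, 41]

print(is_anomalous(recent_cpu, 44))
print(is_anomalous(recent_cpu, 60))
```

Because the threshold is recomputed from recent history, a container that normally runs hot does not trigger the same alarm as one that suddenly spikes, which is the false-alarm reduction the paragraph above describes.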

If an organization is working with Docker and needs an overall monitoring tool for its software, these features should be taken into consideration when choosing one.

ManageEngine Applications Manager offers a centralized interface between the administrator and the Docker environment, along with a wide range of features that improve performance and efficiency. This support extends to over 150 technologies like Kubernetes, AWS, Hyper-V and many more.

Explore ManageEngine Applications Manager’s free 30-day trial version or schedule a demo with solution experts to discover more about monitoring your Docker infrastructure.
