How to Install Docker on Linux and Run Your First Container

Docker is a platform for packaging and running applications in isolated units called containers. Each container bundles an application together with its libraries and dependencies, and shares the host’s Linux kernel instead of booting a full separate OS. This guide shows how to install Docker on Linux and run your first container.

In practice, Docker containers are much lighter than traditional virtual machines, yet they allow you to deploy services (websites, databases, media servers, etc.) on any Linux host. For example, a Linux server can run a personal website, a media server, and cloud storage all in Docker containers. If you are coming from a VM-based workflow, containers keep each service’s processes, filesystem, and dependencies separate with far less overhead, although they share the host kernel and so isolate less strictly than a VM. For a deeper look at choosing the right base system, see Choosing the Best Linux Server Distro.

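This guide walks through installing Docker on Ubuntu 24.04 LTS (server or VM) and starting your first container. The same steps work on Ubuntu 22.04. On Debian 12/13 and other Debian-based systems they also work, provided you substitute Docker’s Debian repository URL (https://download.docker.com/linux/debian) for the Ubuntu one in the repository step.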

Install Docker on Ubuntu 24.04 LTS

Remove Conflicting Packages

Before installing Docker Engine, remove any old or conflicting packages that may have been installed from default Ubuntu repositories. These older packages (docker.io, podman-docker, etc.) can conflict with the official Docker Engine:

for pkg in docker.io docker-doc docker-compose docker-compose-v2 podman-docker containerd runc; do sudo apt remove -y "$pkg" 2>/dev/null; done

If none of these are installed, the command will simply skip them.
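If you would rather see what is installed before removing anything, a read-only check along these lines may help. It is a minimal sketch that assumes a Debian-based system with dpkg-query available; the helper name list_installed is made up for this example.

```shell
# Report which of the conflicting packages are currently installed.
# Nothing is removed; this only reads the dpkg database.
conflicts="docker.io docker-doc docker-compose docker-compose-v2 podman-docker containerd runc"

# list_installed is a hypothetical helper: it prints each package name
# from its arguments that dpkg reports as fully installed.
list_installed() {
  for pkg in "$@"; do
    if dpkg-query -W -f='${Status}' "$pkg" 2>/dev/null | grep -q "install ok installed"; then
      echo "$pkg"
    fi
  done
}

# $conflicts is left unquoted on purpose so it splits into separate names.
found=$(list_installed $conflicts)
if [ -n "$found" ]; then
  echo "Installed conflicting packages:"
  echo "$found"
else
  echo "No conflicting packages found."
fi
```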

Install Prerequisites and Add Docker’s Repository

Update your package index and install the required tools:

sudo apt update
sudo apt install -y ca-certificates curl

Next, add Docker’s official APT repository. This lets you install the latest Docker Engine directly from Docker. Run:

# Create the keyrings directory
sudo install -m 0755 -d /etc/apt/keyrings
# Download Docker's official GPG key
sudo curl -fsSL https://download.docker.com/linux/ubuntu/gpg -o /etc/apt/keyrings/docker.asc
sudo chmod a+r /etc/apt/keyrings/docker.asc
# Add the Docker repository to APT sources
echo \
"deb [arch=$(dpkg --print-architecture) signed-by=/etc/apt/keyrings/docker.asc] \
https://download.docker.com/linux/ubuntu $(. /etc/os-release && echo "$VERSION_CODENAME") stable" \
| sudo tee /etc/apt/sources.list.d/docker.list > /dev/null

Note: These steps follow Docker’s official installation instructions. Docker’s documentation has equivalent instructions for other distros.
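To see what the repository step is expected to write, the source line can be rebuilt from its two variable parts. This is a sketch only: docker_repo_line is a hypothetical helper, and the fixed values assume an amd64 machine running Ubuntu 24.04, whose codename is noble.

```shell
# docker_repo_line is a hypothetical helper that builds the APT source line
# from an architecture ($1) and a release codename ($2).
docker_repo_line() {
  printf 'deb [arch=%s signed-by=/etc/apt/keyrings/docker.asc] https://download.docker.com/linux/ubuntu %s stable\n' "$1" "$2"
}

# Example with fixed values: amd64 on Ubuntu 24.04 (codename "noble").
docker_repo_line amd64 noble
# prints: deb [arch=amd64 signed-by=/etc/apt/keyrings/docker.asc] https://download.docker.com/linux/ubuntu noble stable

# Show what the tee command actually wrote, if the file exists, for comparison.
if [ -r /etc/apt/sources.list.d/docker.list ]; then
  cat /etc/apt/sources.list.d/docker.list
fi
```

If the two lines differ in architecture or codename, the values reported by dpkg --print-architecture and /etc/os-release on your machine are the ones to trust.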

Install Docker Engine

Now install Docker Engine and its related tools:

sudo apt update && sudo apt install -y docker-ce docker-ce-cli containerd.io docker-buildx-plugin docker-compose-plugin

This installs Docker Engine (the container runtime), the command-line interface, the Docker Compose plugin, and the Buildx plugin for multi-platform builds. The Docker service should start automatically. Check its status with sudo systemctl status docker. If it is not running, start it with sudo systemctl start docker.

You can also enable Docker to start on every boot:

sudo systemctl enable docker

Optional: To run Docker commands without typing sudo every time, add your user to the docker group:

sudo usermod -aG docker $USER

Then log out and back in (or reboot) so the group change takes effect.
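One way to tell whether the group change has reached your current session is to compare the groups of the running shell with the name docker. The sketch below relies on id -nG, which without a user name reports the groups of the current process rather than the account database; has_group is a hypothetical helper.

```shell
# has_group is a hypothetical helper: it succeeds if group name $1
# appears as a whole word in the space-separated list $2.
has_group() {
  case " $2 " in
    *" $1 "*) return 0 ;;
    *) return 1 ;;
  esac
}

# `id -nG` with no user argument lists the groups of this shell session,
# so it only shows "docker" after you have logged in again.
if has_group docker "$(id -nG)"; then
  echo "This session has docker group access."
else
  echo "Not in the docker group yet; log out and back in, or keep using sudo."
fi
```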

Run Your First Docker Container

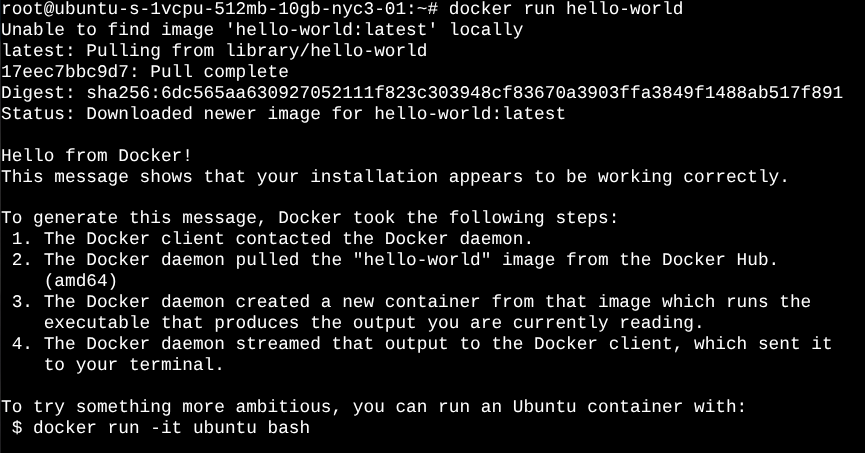

With Docker installed, let’s run a test container. Docker uses images (templates) to create containers. Docker Hub (the default online image registry) hosts thousands of official and community images. A good first test is the hello-world image. In your terminal, run:

docker run hello-world

(sudo is not needed if your user is in the docker group.) Docker will download the hello-world image and run it. You should see a message like “Hello from Docker!” confirming the install.

As the Docker docs explain, hello-world is a test image that prints a confirmation and then exits. For example, after running the command, you’ll see output similar to:

Hello from Docker!
This message shows that your installation appears to be working correctly.
...

This means Docker successfully ran the container and printed its message.

You can now inspect containers and images:

- docker ps shows running containers (there will be none after hello-world exited).

- docker ps -a shows all containers (including stopped ones). You should see the hello-world container in the list.

- docker images lists downloaded images (you’ll see hello-world there).

As another quick test, try running an interactive Ubuntu shell container:

docker run -it ubuntu:24.04 bash

This command downloads the Ubuntu 24.04 image (if not already present) and runs bash inside a new container. You’ll get a shell prompt (as root). Inside the container you can run Linux commands (e.g. apt update, ls, etc.) independently of your host. Type exit to leave the container shell; that stops the container.

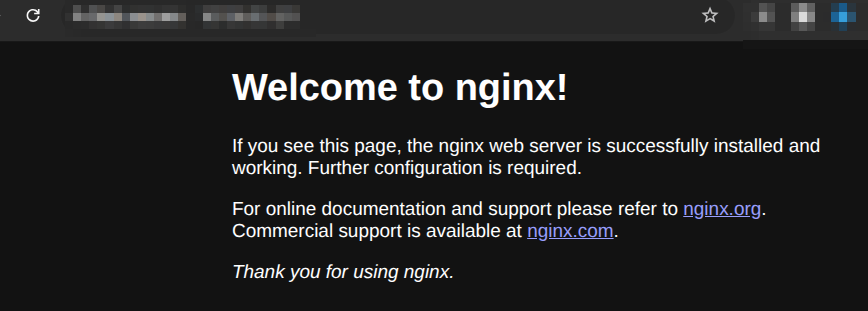

You can also run services in detached mode. For example, start an Nginx web server container bound to port 80:

docker run -d -p 80:80 --name myweb nginx

This downloads the nginx image and runs it in the background (-d), mapping container port 80 to host port 80. In your web browser, enter the IP address of your server to test (or use curl localhost or curl remote_ip), and you should see the Nginx welcome page. Stop it with docker stop myweb.
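A container started with -d returns immediately, so a script may need to wait until Nginx actually answers. The following is a rough sketch of such a check, assuming curl is installed. The helper wait_for_http, the container name myweb-test, and host port 8080 are all choices made for this example so that it does not clash with the myweb container above.

```shell
# wait_for_http is a hypothetical helper: it polls URL $1 once per second,
# up to $2 attempts (default 10), and succeeds on the first good response.
wait_for_http() {
  url=$1
  tries=${2:-10}
  i=0
  while [ "$i" -lt "$tries" ]; do
    if curl -fsS -o /dev/null --max-time 2 "$url" 2>/dev/null; then
      return 0
    fi
    i=$((i + 1))
    sleep 1
  done
  return 1
}

# Only run the demo when the Docker daemon is reachable.
if docker info >/dev/null 2>&1; then
  # --rm removes the container automatically once it is stopped.
  docker run -d --rm -p 8080:80 --name myweb-test nginx
  if wait_for_http http://localhost:8080/ 15; then
    echo "Nginx is answering on port 8080"
  else
    echo "No HTTP response yet; check: docker logs myweb-test"
  fi
  docker stop myweb-test
fi
```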

Congratulations, you now have a working Docker host and have run your first containers!

Docker Compose: Run Multi-Container Services

Running containers with docker run works for quick tests, but most real projects involve multiple containers working together. Docker Compose lets you define and manage multi-container applications using a single YAML file.

Since you installed the docker-compose-plugin package earlier, Docker Compose is already available as docker compose (no separate install needed).

Here is a simple example. Create a file called docker-compose.yml:

services:
  web:
    image: nginx
    ports:
      - "80:80"
    volumes:
      - ./html:/usr/share/nginx/html:ro

This defines a single service called web that runs Nginx and maps a local html folder into the container as the web root. To start it, run:

docker compose up -d

The -d flag runs it in the background. To stop and remove the container:

docker compose down
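The Compose file mounts ./html as the web root, so that folder needs at least one page in it. If it is empty, Nginx will typically answer with 403 Forbidden instead of a page. A minimal sketch for creating a placeholder page next to docker-compose.yml follows; the page content is an arbitrary example.

```shell
# Create the folder that the Compose file mounts as the Nginx web root.
mkdir -p html

# Write a placeholder page (the content is an arbitrary example).
cat > html/index.html <<'EOF'
<!DOCTYPE html>
<html>
  <head><title>Compose test</title></head>
  <body><h1>Served by Nginx in Docker Compose</h1></body>
</html>
EOF

# With the page in place, start the service and request it:
#   docker compose up -d
#   curl http://localhost
```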

Compose is especially useful when you need to run an application with a database, a reverse proxy, and other services together. For example, running Nextcloud with a MariaDB database becomes a single docker compose up -d instead of managing multiple docker run commands.
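As an illustration of the Nextcloud example, the sketch below writes a two-service Compose file into a scratch directory and validates it without starting anything. Treat it as a starting point under assumptions, not a production setup: the passwords are placeholders, host port 8080 is an arbitrary choice, and the environment variable names are the ones documented for the official mariadb and nextcloud images, which you should confirm against the current image documentation.

```shell
# Write a hypothetical two-service Compose file into a scratch directory.
project_dir=$(mktemp -d)

cat > "$project_dir/compose.yaml" <<'EOF'
services:
  db:
    image: mariadb
    restart: unless-stopped
    environment:
      MARIADB_ROOT_PASSWORD: change-me-root   # placeholder, replace it
      MARIADB_DATABASE: nextcloud
      MARIADB_USER: nextcloud
      MARIADB_PASSWORD: change-me             # placeholder, replace it
    volumes:
      - db_data:/var/lib/mysql

  app:
    image: nextcloud
    restart: unless-stopped
    depends_on:
      - db
    ports:
      - "8080:80"
    environment:
      MYSQL_HOST: db                          # service name of the database
      MYSQL_DATABASE: nextcloud
      MYSQL_USER: nextcloud
      MYSQL_PASSWORD: change-me               # must match MARIADB_PASSWORD
    volumes:
      - nextcloud_data:/var/www/html

volumes:
  db_data:
  nextcloud_data:
EOF

echo "Wrote $project_dir/compose.yaml"

# Validate the file if the Compose plugin is available (no containers start).
if docker compose version >/dev/null 2>&1; then
  docker compose -f "$project_dir/compose.yaml" config --quiet && echo "Compose file is valid"
fi
```

Named volumes (db_data and nextcloud_data here) keep the database and application data outside the containers, so docker compose down followed by docker compose up -d brings the same data back.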

For the full reference, see the Docker Compose file documentation.

Troubleshooting Common Docker Issues

If you run into problems after installing Docker on Linux, here are some common issues and how to fix them.

Permission denied when running docker commands. This usually means your user is not in the docker group, or that you have not logged out and back in since being added. Run sudo usermod -aG docker $USER, then log out and back in. Verify by running groups and confirming that “docker” appears in the output.

Docker daemon not starting. Check the service logs with sudo journalctl -u docker.service --since "10 minutes ago". Common causes include storage driver incompatibility or a corrupted /var/lib/docker directory. Restarting the service with sudo systemctl restart docker often resolves transient issues.

Port already in use. If you see “bind: address already in use” when starting a container, another service is using that port. Find it with sudo ss -tlnp | grep :80 (replace 80 with the port in question). Either stop the conflicting service or map the container to a different host port (e.g. -p 8080:80).
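If you just need any free host port, the listener list from ss can be scanned for the first candidate that is not taken. This is a sketch under assumptions: first_free_port is a hypothetical helper, the candidate ports are arbitrary, and it expects ss -Htln style input, where the fourth column is the local address and port.

```shell
# first_free_port is a hypothetical helper. It reads listener lines on stdin
# (format of `ss -Htln`: 4th column is "address:port") and prints the first
# port from its arguments that has no TCP listener.
first_free_port() {
  # Keep only the port, i.e. the text after the last colon of column 4.
  listeners=$(awk '{ n = split($4, a, ":"); print a[n] }')
  for port in "$@"; do
    if ! printf '%s\n' "$listeners" | grep -qx "$port"; then
      echo "$port"
      return 0
    fi
  done
  return 1
}

# -H drops the header line, -t selects TCP, -l listening sockets, -n numeric ports.
ss -Htln 2>/dev/null | first_free_port 80 8080 8081
```

The printed port can then be used on the host side of the mapping, for example -p 8080:80. If ss is not installed the helper sees no listeners and simply prints the first candidate, so treat the result as a hint.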

Cannot connect to the Docker daemon. If you see “Cannot connect to the Docker daemon at unix:///var/run/docker.sock”, Docker is not running. Start it with sudo systemctl start docker. If the socket file is missing entirely, restarting the socket unit with sudo systemctl restart docker.socket normally recreates it; reinstalling Docker is a last resort.
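A quick way to narrow down the last case is to check whether the socket path exists and is really a socket before touching the service. The sketch below assumes the default socket path from the error message; socket_state is a hypothetical helper.

```shell
# socket_state is a hypothetical helper that classifies a path as
# "socket", "not-a-socket", or "missing".
socket_state() {
  if [ -S "$1" ]; then
    echo "socket"
  elif [ -e "$1" ]; then
    echo "not-a-socket"
  else
    echo "missing"
  fi
}

sock=/var/run/docker.sock
echo "docker.sock: $(socket_state "$sock")"

# On systemd hosts, also print the service state (for example "active" or "inactive").
if command -v systemctl >/dev/null 2>&1; then
  systemctl is-active docker || true
fi
```

A result of "socket" together with an inactive service points at the daemon itself, in which case the journalctl command above is the next place to look.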

For a broader troubleshooting framework that applies to Docker and any other Linux service, see Linux Troubleshooting: These 4 Steps Will Fix 99% of Errors.

Docker vs. Podman

Docker is the most widely used container engine, but it is not the only option. Podman is a daemonless alternative that runs containers without a background service and supports fully rootless operation out of the box. Podman uses the same image formats and most of the same CLI commands, so switching between them is straightforward.

If you want to explore rootless containers or need to avoid running a root-level daemon, read the full comparison in Docker Alternative: Podman on Linux.

Next Steps

With Docker set up, you can now explore many Linux server projects in containers. The LinuxBlog.io articles “What’s Next After Installation” and “DIY Projects for Linux Beginners” provide great inspiration. For example, you could use Docker to:

- Host a personal website (e.g. Nginx or Apache) in a container.

- Run a media server like Plex or Jellyfin to stream your videos/music.

- Deploy a cloud storage app (Nextcloud/ownCloud) for self-hosted file syncing.

- Set up a VPN or ad-blocking service (OpenVPN, Pi-hole) on your home network.

- Run a database (MySQL/PostgreSQL) container and connect it to your apps.

- Self-host development tools (GitLab/Gitea for Git, Jenkins for CI) in Docker.

- Create a home automation server (Home Assistant) in a container.

- Experiment with containerized monitoring tools. See: Docker Monitoring 101.

- Set up observability for your containers and services. See: Observability: 20 Free Access and Open-Source Solutions.

Each of these projects builds on the Linux server skills discussed in those articles.

Conclusion

Installing Docker on Linux gives you a clean, consistent way to deploy and manage applications without the overhead of full virtual machines. Whether you are running a home server, a lab environment, or a single VM, containers simplify the process of getting services up and running.

Now that your Docker host is ready, pick a project from the list above and try it out. Start with something small, like a Compose file for Nginx, and build from there. The more you experiment, the more natural it becomes.

Nice article. It doesn’t explain why Docker is better than running those services in a “Linux Jail” or in a VM, though. I might try installing it just to play with some things I’ve been wanting to try, IDK.

Anyways, very well written article.

I haven’t used Docker as yet, but I am in the market for using a couple of containers. I was also doing a comparison between Docker and Podman.

Any thoughts on the differences?

@hydn Great article as always! I enjoy reading what you put together as it is always organized well.

@tmick I do not have much experience with “Linux Jails”, so I won’t be able to speak to that, but I use Docker Containers and Docker Compose daily. 90% of the services I run at home are through containers. I have a few VMs still up and running as well.

I personally chose to explore containers more due to an incident I ran into many years ago with my hosted email setup. I use a combination of postfix, dovecot, and opendkim. At the time, I was running them “on bare metal” next to an Icinga2 instance, a Grafana instance, and several other apps.

I was installing updates with apt (sudo apt update && sudo apt upgrade -y), and at the end of the install, postfix was toast. My /etc/postfix directory was mostly empty, and I couldn’t get my email server back up and running (thankfully the actual emails were fine).

I was relatively new to Linux at this time too, so I’m sure plenty of mistakes were made by me that got me into this situation. Maybe I had conflicts in dependencies? Maybe I answered questions from apt wrong? Maybe postfix did an update and I misinterpreted what was happening? Regardless, I decided right then and there, that I didn’t want that situation to happen again.

So why do I use Docker and containers over jails/VMs? Because I wanted to create an environment where one package’s dependencies wouldn’t impact another’s. And I wanted to create a way to quickly “get back” to where I was before a change.

Containers are great for both of those use cases. They are meant to be ephemeral. You can stand them up and blow them away, and they should still function the next time you spin them back up. Your config and data live outside of the container, so you have little risk. I can also easily “transfer” my hosted services to another machine because I can just rsync the configs + data volume to another machine, spin up a container, and know it will function exactly the same.

Can you do this in a VM? Absolutely! To me, a VM feels too heavy for my use case though. Why spin up an entire operating system and services that require dedicated high resource allocation by the host when I can get the same with less effort and less resource allocation in a container? Containers are definitely not the end-all-be-all answer, and I’ve definitely seen them used as a hammer when the right tool should’ve been a screwdriver.

I’m guessing you have the experience and knowledge to do something similar with a jail? And I would imagine, based on my limited understanding of jails or chroot, that you could make that function in a very similar way. But for me, containers were the best choice for my use cases. Hopefully my perspective can help answer some of the “why” part of your question!

Your question mostly answered my “why” question. But brings up another one. When you state

You’re saying there’s a back-up methodology for them then?

Great question ShyBry747!! I haven’t used either one so I’m no help there.
